METR’s new survey gives both sides of the AI productivity argument something to quote, which is why it is worth reading carefully.

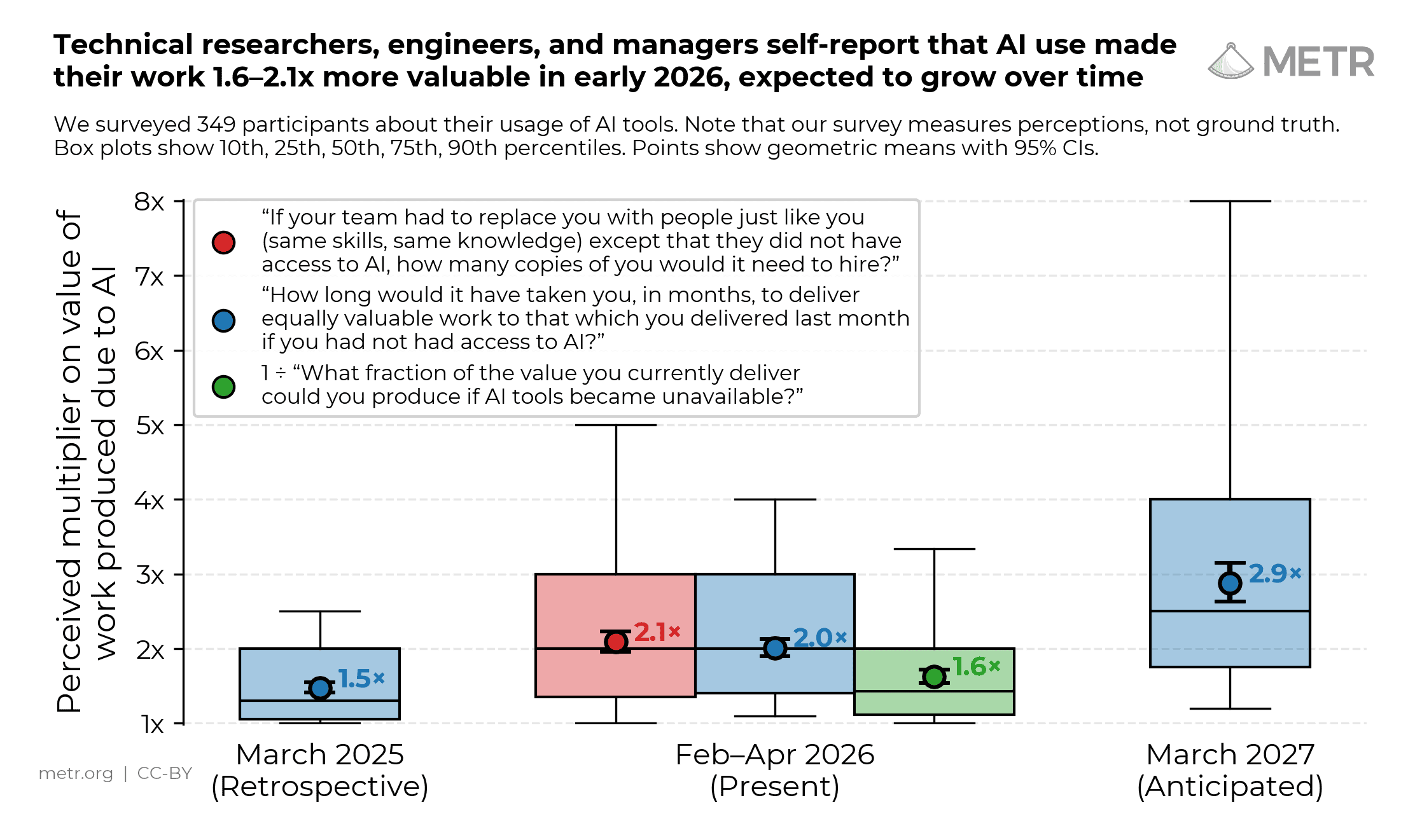

The research organisation surveyed 349 technical workers between February and April 2026, including 87 software engineers, 71 researchers, 129 academics and PhD students, and 48 founders and managers. Respondents reported substantial gains from AI tools: a median 1.4–2x change in the value of their work, and a median 3x speed change.

That headline sounds dramatic. METR’s own framing is more cautious.

The key distinction is between speed and value. A developer may use AI to produce a dashboard, refactor a low-priority module, or explore an idea that would previously have been too expensive. That can feel much faster than doing it manually. But it does not automatically mean the organisation created three times as much value. Some AI-assisted work shifts people into tasks that are now cheaper, not necessarily tasks that matter most.

METR says it tried to capture gains in terms of value — “how much more value are you creating with AI” — rather than only asking how long tasks would have taken without AI. It also notes that speed changes would typically overstate value changes.

The time trend is striking. Using the same wording for each date, respondents put the value of their work at 1.3x for March 2025 (estimated retrospectively), 2x for March 2026, and 2.5x for March 2027 (a forecast). That suggests technical workers feel the tools improving quickly, especially as agentic coding systems become normal parts of workflows. The respondents were also experienced AI users: METR reports that they averaged 12 years of programming experience, 19 months using AI for programming, and seven months using agentic AI coding tools, and that 50% regularly used Claude Code.

But the caveats are not small print. METR writes that survey results are “not necessarily grounded in reality” and gives reasons to be sceptical of counterfactual productivity claims. It points to its own early-2025 study finding that people overestimated AI’s effect on time spent on tasks by 40 percentage points on average. It also notes that public survey estimates of productivity impacts have tended to be greater than estimates from field experiments or quasi-experiments.
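To make the unit concrete: a gap in percentage points is a simple difference between two percentages, not a ratio. The sketch below uses invented figures (they are not taken from METR's study) and a hypothetical helper name, `overestimate_in_points`, purely to show how such a gap is computed.

```python
def overestimate_in_points(perceived_time_saved_pct: float,
                           measured_time_saved_pct: float) -> float:
    """Gap between perceived and measured time savings, in percentage points.

    Both inputs are percentages of baseline task time. A negative value
    means the task took longer with AI than without it.
    """
    return perceived_time_saved_pct - measured_time_saved_pct


# Hypothetical figures, chosen only to illustrate a 40-point gap:
# the developer believes AI saved 25% of the time, while a measured
# comparison shows the tasks actually took 15% longer.
gap = overestimate_in_points(perceived_time_saved_pct=25.0,
                             measured_time_saved_pct=-15.0)
print(f"Overestimate: {gap:.0f} percentage points")
```

The point of the illustration is that a gap of this size can separate a felt speed-up from a measured slowdown, which is why self-reports alone are a weak basis for decisions.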

For engineering leaders, that is the useful lesson. Do not ignore developer reports: if experienced technical workers consistently feel that AI changes their output, that matters. But do not manage the business on vibes. Measure by task type.

Agentic coding may be excellent for scaffolded tests, migrations, codebase search, small bug fixes, documentation, prototypes and papercut quality improvements. It may be weaker where requirements are ambiguous, domain context is tacit, or review cost eats the implementation gain. A single productivity multiplier across the whole team will hide more than it reveals.
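A minimal sketch of what measuring by task type could look like, assuming a team has some measured baseline for comparable tasks done without AI (for example, from a controlled comparison rather than from self-report). The task categories, hours, and function names here are all hypothetical and invented for illustration.

```python
from collections import defaultdict

# Hypothetical task records: (task_type, hours_without_ai, hours_with_ai).
# Every number here is invented for illustration only.
TASKS = [
    ("scaffolded_tests", 4.0, 1.0),
    ("scaffolded_tests", 2.0, 0.5),
    ("migration", 6.0, 3.0),
    ("ambiguous_feature", 10.0, 12.0),
    ("ambiguous_feature", 8.0, 8.0),
]


def speedup_by_task_type(tasks):
    """Return {task_type: total baseline hours / total AI-assisted hours}."""
    baseline = defaultdict(float)
    assisted = defaultdict(float)
    for task_type, hours_without, hours_with in tasks:
        baseline[task_type] += hours_without
        assisted[task_type] += hours_with
    return {t: baseline[t] / assisted[t] for t in baseline}


def pooled_speedup(tasks):
    """Single team-wide ratio: all baseline hours over all assisted hours."""
    total_without = sum(hours_without for _, hours_without, _ in tasks)
    total_with = sum(hours_with for _, _, hours_with in tasks)
    return total_without / total_with


for task_type, ratio in speedup_by_task_type(TASKS).items():
    print(f"{task_type}: {ratio:.2f}x")
print(f"pooled: {pooled_speedup(TASKS):.2f}x")
```

With these invented numbers, the pooled figure comes out at roughly 1.2x even though the per-type ratios range from 0.9x (slower with AI) to 4x. The single number hides both the strongest gains and the category where review and rework cancel them out.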

METR’s survey is therefore less a verdict than a warning label. AI tools are clearly changing how technical workers experience their jobs. The next question is whether teams can turn that felt speed into durable, reviewable, customer-visible value.